EventBridge API Destination

This article walks through EventBridge API destinations and delves into some of the architectural abstractions involved when using them.

EventBridge provides a highly available and scalable service bus that covers both the point-to-point and fan-out communication patterns. It integrates with a very wide range of AWS services and also provides a way to communicate with external systems and many well-known partners in the software industry.

API Destinations

EventBridge API destinations allow you to send events to HTTPS endpoints. This means any public-facing, resolvable domain can be used as the target of an API destination.

API destinations support all HTTP methods except TRACE and CONNECT, so you can use GET, HEAD, POST, PUT, PATCH, OPTIONS, and DELETE.

To use an API destination, a connection must be configured first; the connection is where the authorization mechanism is defined.

The supported auth types are:

OAuth (client credentials)

API key

Basic (username/password)
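For Basic and API key, the connection essentially adds a header to each request; for OAuth it attaches a bearer token that must have been obtained beforehand. A minimal sketch of that header generation, assuming hypothetical names (`ConnectionAuth`, `buildAuthHeaders`) that are not part of any AWS API:

```typescript
// Hypothetical model of the three connection auth types.
// These names are illustrative only, not an AWS SDK API.
type ConnectionAuth =
  | { kind: "BASIC"; username: string; password: string }
  | { kind: "API_KEY"; headerName: string; apiKey: string }
  | { kind: "OAUTH_CLIENT_CREDENTIALS"; accessToken: string };

// Builds the HTTP header(s) that carry the credentials for one request.
function buildAuthHeaders(auth: ConnectionAuth): Record<string, string> {
  switch (auth.kind) {
    case "BASIC":
      // RFC 7617: base64("username:password"); btoa assumes ASCII credentials.
      return { Authorization: `Basic ${btoa(`${auth.username}:${auth.password}`)}` };
    case "API_KEY":
      // The header name is whatever the connection was configured with.
      return { [auth.headerName]: auth.apiKey };
    case "OAUTH_CLIENT_CREDENTIALS":
      // The token has to be fetched from the OAuth server before this point.
      return { Authorization: `Bearer ${auth.accessToken}` };
  }
}
```

The OAuth case is the only one that depends on an earlier network call, which is exactly the open question about the connection component raised below.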

The following representation shows my understanding of how an API destination works (any feedback is appreciated):

REST API façade: the entry point of the API destination, triggered by a rule or pipe for every matched event.

Rate limiter: when you create an API destination and set an invocation rate limit per second, the API destination explicitly caps its incoming throughput per second at that value.

How exactly the rate limit works is a big open point; so far I have found no article or blog post that represents it correctly, not even the Serverless Land visuals for invocation rate.

Connection: the connection validates and prepares the authorization configuration.

What I would like to know is whether this component is also responsible for the OAuth call. For the API key and Basic types, adding a header is sufficient, but OAuth requires a call to the authorization server, so I'm curious about that.

Target invoker: this name is my own, used to decompose the whole process; it refers to the module that calls the target over HTTP.

Overview

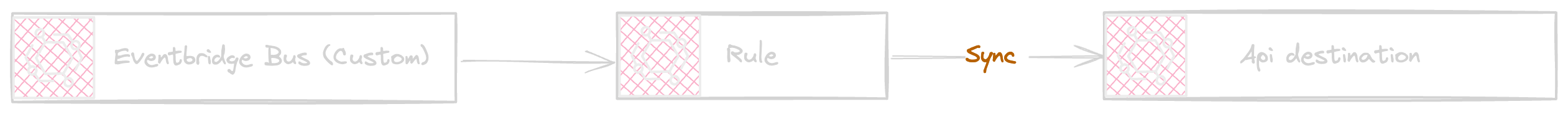

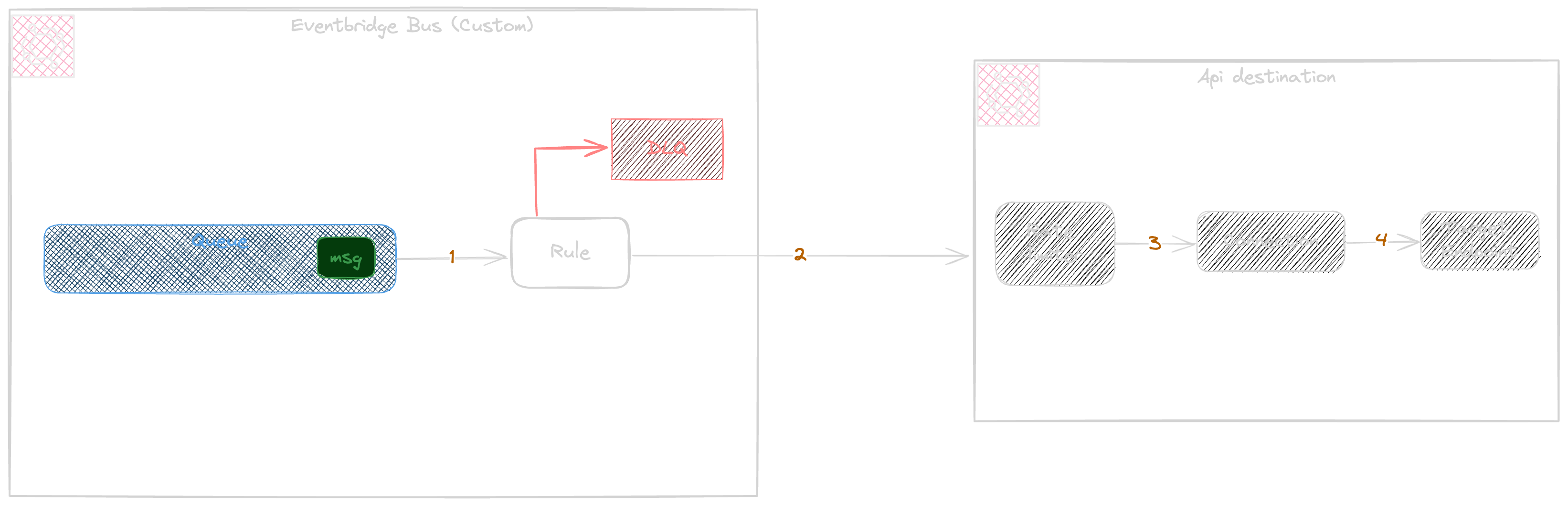

The REST API receives events over HTTP and is invoked by a rule or a pipe. The following diagram shows the rule integration with the API destination.

The rule delivers every matched event by calling the API destination synchronously, and the delivery is acknowledged only when the call to the target succeeds.

EventBridge's default retry policy reattempts delivery of an event for up to 24 hours and up to 185 times, with exponential backoff and jitter. This way, EventBridge makes a best effort to deliver the event.

You can add a retry policy to customize the default retry configuration; the retry policy accepts the maximum age of an event to keep and the maximum number of retry attempts in case of errors.

This is a good opportunity to avoid losing events when the target returns an error or is unable to receive them.
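To make the two retry policy knobs concrete, here is a small sketch of the decision they imply: another attempt is allowed only while the event is younger than the maximum age and the retry budget is not used up. The field names are camel-cased versions of the EventBridge `RetryPolicy` fields (`MaximumEventAgeInSeconds`, `MaximumRetryAttempts`); the `canRetry` function is my own illustrative assumption, not EventBridge's internal logic. In CDK, the same settings appear as `maxEventAge` and `retryAttempts` (plus `deadLetterQueue`) on the `aws-events-targets` `ApiDestination` target.

```typescript
// Camel-cased mirror of the EventBridge target RetryPolicy fields.
interface RetryPolicy {
  maximumEventAgeInSeconds: number;
  maximumRetryAttempts: number;
}

// Documented defaults: retry for up to 24 hours and up to 185 times.
const DEFAULT_RETRY_POLICY: RetryPolicy = {
  maximumEventAgeInSeconds: 24 * 60 * 60,
  maximumRetryAttempts: 185,
};

// Illustrative decision: another attempt is allowed only while BOTH limits
// still hold, so whichever limit is reached first ends the retries.
function canRetry(
  retriesSoFar: number,
  eventAgeSeconds: number,
  policy: RetryPolicy = DEFAULT_RETRY_POLICY,
): boolean {
  return (
    retriesSoFar < policy.maximumRetryAttempts &&
    eventAgeSeconds < policy.maximumEventAgeInSeconds
  );
}

// A tighter custom policy: give up after 1 hour or 3 retries.
const customPolicy: RetryPolicy = { maximumEventAgeInSeconds: 3600, maximumRetryAttempts: 3 };
```

With `customPolicy`, `canRetry(2, 100, customPolicy)` is still `true`, while `canRetry(3, 100, customPolicy)` is `false` because the retry budget is spent.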

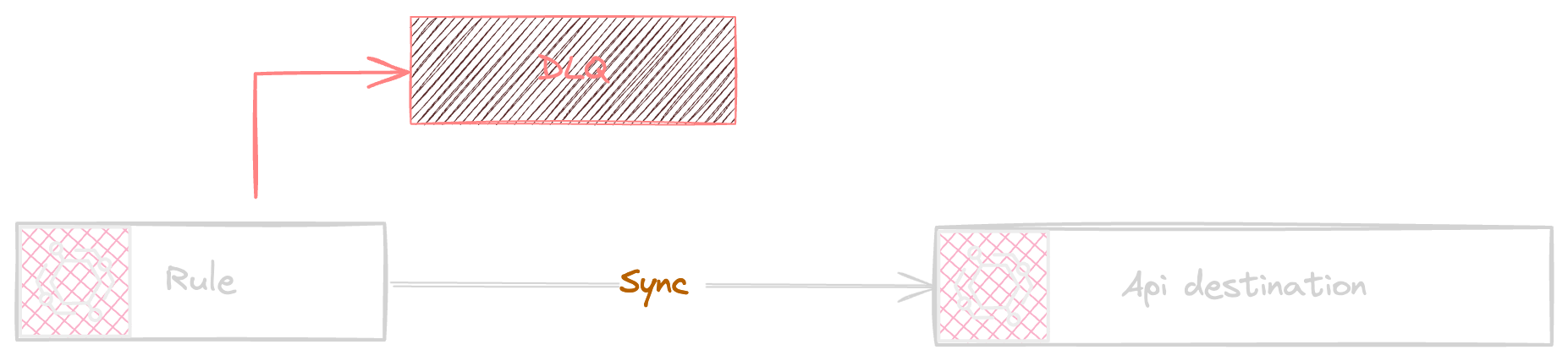

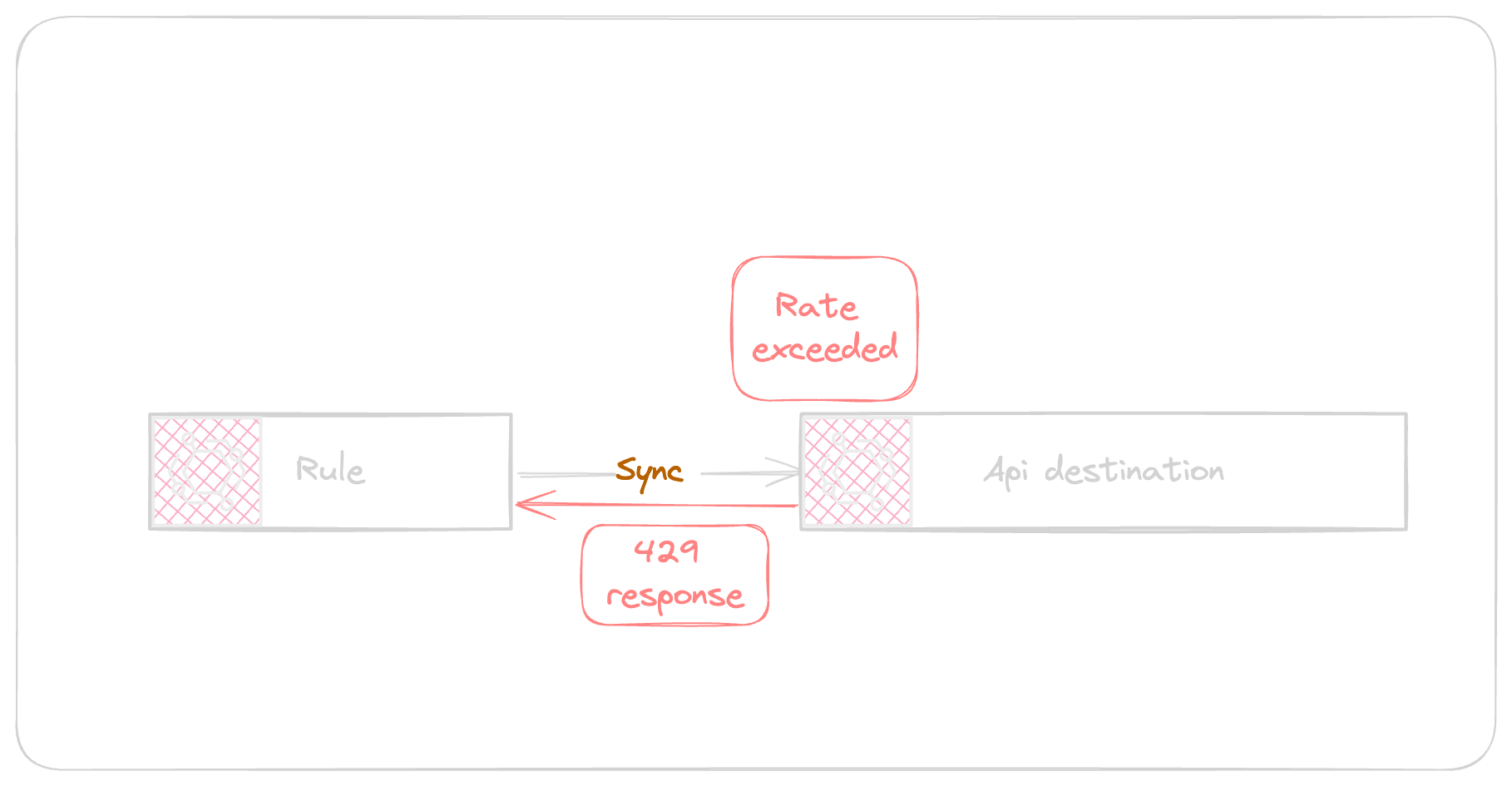

As part of request validation, the API destination checks the rate limit: if the throughput exceeds the configured limit, the API destination throttles the requests, as shown in the following diagram.

EventBridge and rules are abstract concepts on top of a queueing system.

The event flow is as follows:

The EventBridge event is sent to the rule.

The rule sends the request to the API destination via an API call.

The API checks the rate limiter status.

The connection applies the auth configuration.

The target invoker calls the external API endpoint over HTTP.
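The flow above can be sketched as a single delivery function wired from the components of my representation. All names here (`deliver`, `RateLimiter`, `AuthProvider`, `HttpInvoker`) are hypothetical and only model my understanding; they are not AWS APIs, and the HTTP call is injected so the sketch runs without a network.

```typescript
// Hypothetical result of one delivery attempt.
type DeliveryResult =
  | { status: "THROTTLED" }
  | { status: "DELIVERED"; httpStatus: number }
  | { status: "FAILED"; httpStatus: number };

interface RateLimiter {
  tryAcquire(): boolean; // true if the request fits in the rate limit
}
type AuthProvider = () => Record<string, string>; // the connection's role
type HttpInvoker = (headers: Record<string, string>, body: string) => number; // returns HTTP status

function deliver(
  event: object,
  limiter: RateLimiter,
  auth: AuthProvider,
  invoke: HttpInvoker,
): DeliveryResult {
  // Step 3: the API destination checks the rate limiter first.
  if (!limiter.tryAcquire()) return { status: "THROTTLED" };
  // Step 4: the connection supplies the auth headers.
  const headers = auth();
  // Step 5: the target invoker calls the external endpoint over HTTP.
  const httpStatus = invoke(headers, JSON.stringify(event));
  return httpStatus >= 200 && httpStatus < 300
    ? { status: "DELIVERED", httpStatus }
    : { status: "FAILED", httpStatus };
}
```

Placing the rate limiter before the connection means a throttled request never reaches the auth step or the target, which is what the throttling diagram suggests.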

Source Code

Rate Limiting

This section is a brief recap of my own understanding after some discussions and research.

When you configure an API destination, you can specify a rate limit, which controls the maximum number of events per second that EventBridge will send to the destination endpoint. This rate limit helps prevent overwhelming the destination with a high volume of events.

The rate limit is based on a token bucket algorithm: it is represented by the size of a token bucket and the rate at which tokens are replenished. Each arriving event consumes a token.

When the bucket is empty, the API destination throttles requests, which means temporarily delaying or buffering some events until tokens become available again.

This is not yet clear (I found no documentation or reference), but per the token bucket algorithm, tokens are added to the bucket at a fixed rate corresponding to the specified rate limit.
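A minimal token bucket sketch, assuming a capacity of one token and a refill rate equal to the configured rate limit. Both values are my assumptions, since EventBridge does not document its internals; `TokenBucket` and `simulateBurst` are illustrative names.

```typescript
// Classic token bucket: `capacity` is the burst size, `refillPerSecond`
// is the configured rate limit. Not EventBridge's actual implementation.
class TokenBucket {
  // Tokens are kept in thousandths so integer millisecond timestamps and
  // integer rates refill exactly, without floating-point drift.
  private milliTokens: number;
  private lastRefillMs: number;
  private readonly capacity: number;
  private readonly refillPerSecond: number;

  constructor(capacity: number, refillPerSecond: number, nowMs: number) {
    this.capacity = capacity;
    this.refillPerSecond = refillPerSecond;
    this.milliTokens = capacity * 1000; // the bucket starts full
    this.lastRefillMs = nowMs;
  }

  // Adds the tokens earned since the last refill, capped at the capacity.
  // refillPerSecond tokens per second equals refillPerSecond milli-tokens per ms.
  private refill(nowMs: number): void {
    const elapsedMs = nowMs - this.lastRefillMs;
    this.milliTokens = Math.min(
      this.capacity * 1000,
      this.milliTokens + elapsedMs * this.refillPerSecond,
    );
    this.lastRefillMs = nowMs;
  }

  // Each event consumes one token; false means the event must wait (throttled).
  tryConsume(nowMs: number): boolean {
    this.refill(nowMs);
    if (this.milliTokens >= 1000) {
      this.milliTokens -= 1000;
      return true;
    }
    return false;
  }
}

// Simulates a burst that arrives at t = 0 and is held until tokens are
// available, checking every stepMs. Returns each event's delivery time in ms.
function simulateBurst(count: number, ratePerSecond: number, stepMs = 100): number[] {
  const bucket = new TokenBucket(1, ratePerSecond, 0);
  const deliveredAt: number[] = [];
  for (let t = 0; deliveredAt.length < count; t += stepMs) {
    while (deliveredAt.length < count && bucket.tryConsume(t)) {
      deliveredAt.push(t);
    }
  }
  return deliveredAt;
}
```

With a 1 RPS limit, `simulateBurst(5, 1)` returns `[0, 1000, 2000, 3000, 4000]`: a burst of five events is spread out at one event per second instead of hitting the target all at once.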

EventBridge continuously monitors the rate of incoming events for each API destination, tracking the number of events received per second and comparing it against that destination's rate limit.

If the rate of incoming events consistently exceeds the specified rate limit, a backoff mechanism is applied: EventBridge gradually decreases the rate at which it sends events to the destination to relieve the overload. Once the incoming rate falls below the limit, EventBridge gradually resumes sending events at the normal rate.
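One common way to get this "back off, then gradually resume" behavior is AIMD (additive increase, multiplicative decrease). The sketch below uses it purely as an illustration; it is my choice of technique, and EventBridge does not document which algorithm it actually applies. `AimdRate` is a hypothetical name.

```typescript
// Illustrative AIMD controller for the send rate (requests per second).
class AimdRate {
  current: number;
  private readonly maxPerSecond: number;
  private readonly minPerSecond: number;

  constructor(maxPerSecond: number, minPerSecond = 1) {
    this.maxPerSecond = maxPerSecond; // the configured rate limit
    this.minPerSecond = minPerSecond; // never back off below this
    this.current = maxPerSecond; // start at the configured limit
  }

  // Overload signal (e.g. throttling or target errors): halve the send rate.
  onOverload(): void {
    this.current = Math.max(this.minPerSecond, this.current / 2);
  }

  // Healthy interval: add one request per second back, up to the limit.
  onHealthy(): void {
    this.current = Math.min(this.maxPerSecond, this.current + 1);
  }
}
```

Starting from a limit of 10, two overload signals drop the rate to 5 and then 2.5, and each healthy interval afterwards adds 1 back until the configured limit is reached again. The asymmetry (fast decrease, slow increase) is what makes the recovery gradual.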

If EventBridge encounters errors while delivering events to the destination, because of throttling or other issues, it retries the delivery according to the target's retry policy. If the errors persist until the policy is exhausted, or the error is not retryable, EventBridge stops trying to deliver that event: it sends the event to the target's dead-letter queue if one is configured, otherwise the event is dropped, and the failure is visible in CloudWatch metrics such as FailedInvocations.
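Not every failed response is retried. Based on my reading of the API destinations error-handling section of the EventBridge User Guide (worth re-checking against the current docs), 401, 407, 409, 429 and 5xx responses are retried while other codes are not. The sketch below encodes that rule together with the DLQ-or-drop outcome described above; `isRetryableStatus` and `nextStep` are hypothetical helpers, not AWS APIs.

```typescript
// 4xx codes that are retried, per my reading of the EventBridge docs.
const RETRYABLE_4XX = new Set([401, 407, 409, 429]);

function isRetryableStatus(httpStatus: number): boolean {
  return RETRYABLE_4XX.has(httpStatus) || (httpStatus >= 500 && httpStatus <= 599);
}

type Outcome = "DELIVERED" | "RETRY" | "DEAD_LETTER" | "DROPPED";

// Hypothetical decision after one attempt: retry while the response is
// retryable and the retry policy still allows it; otherwise send the event
// to the dead-letter queue if one is configured, or drop it.
function nextStep(httpStatus: number, retriesLeft: boolean, hasDlq: boolean): Outcome {
  if (httpStatus >= 200 && httpStatus < 300) return "DELIVERED";
  if (isRetryableStatus(httpStatus) && retriesLeft) return "RETRY";
  return hasDlq ? "DEAD_LETTER" : "DROPPED";
}
```

A 429 from a throttled target is retried, while a 400 goes straight to the DLQ (or is dropped), which is why attaching a dead-letter queue matters even with a generous retry policy.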

Connection

A connection can be treated as an auth configuration black box: you choose the required auth type, and EventBridge stores the credentials securely in an AWS Secrets Manager secret.

The Basic and API key auth types are simple standards, and EventBridge manages populating the credentials and generating the HTTP request header.

OAuth is based on the OAuth 2.0 client credentials grant, a standard for obtaining credentials outside the context of a user. When OAuth is configured, EventBridge calls the OAuth authorization endpoint with the client_id and client_secret to obtain an access_token. When the access_token expires and the target responds with an unauthorized error (401 or 407), EventBridge requests a new access_token from the OAuth server.
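The token handling just described can be sketched as a small cache: fetch a token with the client credentials, reuse it until it expires, and on a 401 or 407 from the target discard it, fetch a new one and retry once. This is an illustration of the behavior, not EventBridge's implementation; `ClientCredentialsTokenCache`, `callWithOAuth` and the injected fetcher are hypothetical, while `access_token` and `expires_in` are the standard OAuth 2.0 token response fields.

```typescript
interface TokenResponse {
  access_token: string;
  expires_in: number; // lifetime in seconds
}
// Injected so the sketch runs without a real OAuth server.
type TokenFetcher = (clientId: string, clientSecret: string) => Promise<TokenResponse>;

class ClientCredentialsTokenCache {
  private token: string | undefined;
  private expiresAtMs = 0;
  private readonly clientId: string;
  private readonly clientSecret: string;
  private readonly fetchToken: TokenFetcher;
  private readonly now: () => number;

  constructor(clientId: string, clientSecret: string, fetchToken: TokenFetcher, now = () => Date.now()) {
    this.clientId = clientId;
    this.clientSecret = clientSecret;
    this.fetchToken = fetchToken;
    this.now = now;
  }

  // Reuses the cached token until it expires, otherwise fetches a new one.
  async getToken(): Promise<string> {
    if (this.token !== undefined && this.now() < this.expiresAtMs) return this.token;
    const res = await this.fetchToken(this.clientId, this.clientSecret);
    this.token = res.access_token;
    this.expiresAtMs = this.now() + res.expires_in * 1000;
    return res.access_token;
  }

  // Forget the token so the next getToken() call fetches a fresh one.
  invalidate(): void {
    this.token = undefined;
  }
}

// Sends with a bearer token; on 401/407 refreshes the token once and retries.
async function callWithOAuth(
  cache: ClientCredentialsTokenCache,
  send: (authorization: string) => Promise<number>,
): Promise<number> {
  let status = await send(`Bearer ${await cache.getToken()}`);
  if (status === 401 || status === 407) {
    cache.invalidate();
    status = await send(`Bearer ${await cache.getToken()}`);
  }
  return status;
}
```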

The practical usage

For the sake of demonstration, this section uses two destinations to show how an EventBridge API destination behaves in practice.

The example connection is simple: it uses the API key auth type and sends the key in the x-api-key header.

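```typescript
import { SecretValue } from 'aws-cdk-lib';
import { Authorization, Connection } from 'aws-cdk-lib/aws-events';

// Inside the stack constructor; `secret` is a Secrets Manager secret
// defined elsewhere in the stack that holds the API key value.
const connection = new Connection(this, Connection.name, {
  // Sends the API key in the x-api-key header of every request.
  authorization: Authorization.apiKey(
    'x-api-key',
    SecretValue.secretsManager(secret.secretArn),
  ),
});
```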

The connection is then used by the API destination.

Create a new webhook.site URL by navigating to https://webhook.site, then set it as the value of the webhooksiteUrl const variable in the CDK stack, in both places where it appears.

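```typescript
import { ApiDestination, HttpMethod } from 'aws-cdk-lib/aws-events';

// props.apiUrl holds the target endpoint URL.
const apiDestination = new ApiDestination(this, 'api-destination', {
  httpMethod: HttpMethod.POST,
  endpoint: props.apiUrl!,
  connection: connection,
  // Caps invocations at 1 per second (enforced on a best-effort basis).
  rateLimitPerSecond: 1,
});
```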

The repository provides a fake events JSON file that lets you send events to the bus as a batch:

```shell
npm run events:send
```
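The repository's send script is not reproduced here. As a hedged sketch, this is how a batch of events could be split into PutEvents requests, which accept at most 10 entries each. The bus name, source and detail type are placeholders, and `toPutEventsBatches` is a hypothetical helper; each resulting array would be passed as `Entries` to the AWS SDK `PutEventsCommand`.

```typescript
// Subset of the fields of one PutEvents entry.
interface PutEventsEntry {
  Source: string;
  DetailType: string;
  Detail: string; // JSON-encoded event payload
  EventBusName: string;
}

// PutEvents accepts at most 10 entries per request.
const MAX_ENTRIES_PER_PUT = 10;

// Turns raw events into PutEvents entries, grouped into request-sized batches.
function toPutEventsBatches(
  events: object[],
  busName: string,
  source: string,
  detailType: string,
): PutEventsEntry[][] {
  const entries: PutEventsEntry[] = events.map((e) => ({
    Source: source,
    DetailType: detailType,
    Detail: JSON.stringify(e),
    EventBusName: busName,
  }));
  const batches: PutEventsEntry[][] = [];
  for (let i = 0; i < entries.length; i += MAX_ENTRIES_PER_PUT) {
    batches.push(entries.slice(i, i + MAX_ENTRIES_PER_PUT));
  }
  return batches;
}
```

Sending 25 events this way produces three requests of 10, 10 and 5 entries, all of which land on the bus almost at once, which is exactly the kind of burst the 1 RPS rate limit has to absorb.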

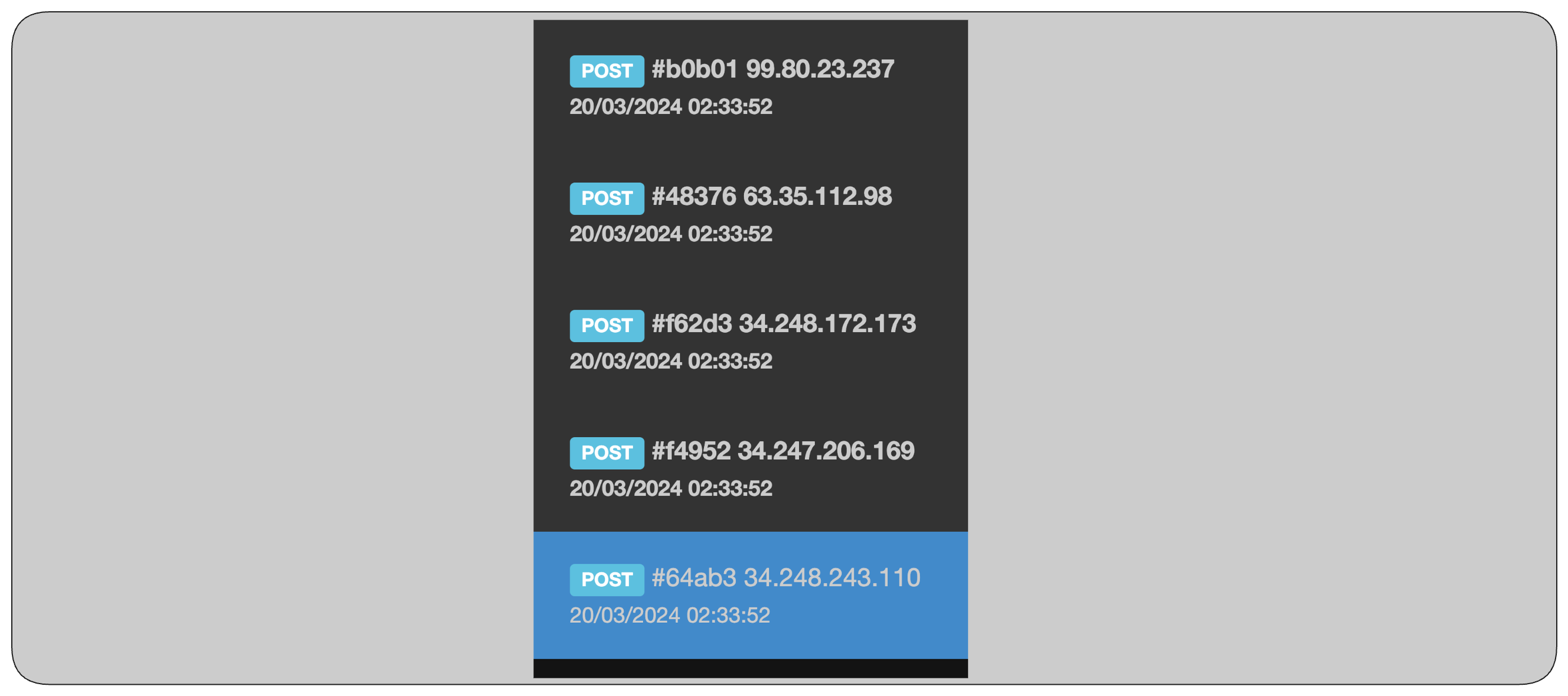

The API destination has a rate limit of 1 RPS. This means the API destination receives events from the rule, and when more than one request arrives per second, the extra requests are throttled at the rule/API destination edge, which prevents the target from being overwhelmed.

Looking closely at the reception times on webhook.site, as in the following figure, the events do reach the target.

If throttling errors cause an event to end up in the DLQ, the message attributes show RETRY_ATTEMPTS, and ERROR_MESSAGE contains the API destination message indicating the reason for the failure, including the target's response payload.
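When inspecting the DLQ programmatically, these values arrive as SQS message attributes. A small sketch that summarizes them, assuming the standard SQS attribute shape (`DataType`, `StringValue`) and the EventBridge attribute names RETRY_ATTEMPTS, ERROR_CODE and ERROR_MESSAGE; `summarizeDlqMessage` is a hypothetical helper.

```typescript
// Shape of one SQS message attribute value (string-typed attributes only).
interface SqsMessageAttribute {
  DataType: string;
  StringValue?: string;
}

interface DlqFailureSummary {
  retryAttempts: number;
  errorCode?: string;
  errorMessage?: string; // includes the target's response payload
}

// Extracts the EventBridge failure details from a DLQ message's attributes.
function summarizeDlqMessage(attrs: Record<string, SqsMessageAttribute>): DlqFailureSummary {
  const value = (name: string): string | undefined => attrs[name]?.StringValue;
  return {
    retryAttempts: Number(value("RETRY_ATTEMPTS") ?? "0"),
    errorCode: value("ERROR_CODE"),
    errorMessage: value("ERROR_MESSAGE"),
  };
}
```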

EventBridge enforces the rate limit on a best-effort basis and does not guarantee it exactly, because rates are not shared globally across the fleet and are propagated asynchronously. It's important to remember that EventBridge is a distributed service, where keeping state consistent without increasing latency is hard to achieve; that is why rate limits are not tracked precisely, especially at low TPS.
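To see why low limits are the hardest to enforce, consider a toy model, which is my assumption and not a description of EventBridge's internals: the global limit is split across several workers that cannot share state instantly, and each worker rounds its share up to a whole request so that small limits are not starved. `worstCaseAggregateRps` and `overshootRatio` are hypothetical helpers.

```typescript
// Toy model: each of `nodes` workers enforces ceil(limit / nodes) locally.
function worstCaseAggregateRps(globalLimitRps: number, nodes: number): number {
  const perNode = Math.ceil(globalLimitRps / nodes);
  return perNode * nodes;
}

// Relative overshoot versus the configured limit (2 means 200% over).
function overshootRatio(globalLimitRps: number, nodes: number): number {
  return worstCaseAggregateRps(globalLimitRps, nodes) / globalLimitRps - 1;
}
```

With three workers, a 300 RPS limit splits evenly (100 each, no overshoot), whereas a 1 RPS limit becomes 1 RPS per worker, so up to 3 RPS can reach the target in the worst case. The rounding error is a fixed amount per worker, so its relative impact grows as the configured TPS shrinks.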